Artificial Audio

The Artificial Audio Group creates new sound experiences through signal processing, machine learning, and an understanding of human perception. We collaborate closely across three universities: FAU, Aalto, and York.

Portfolio

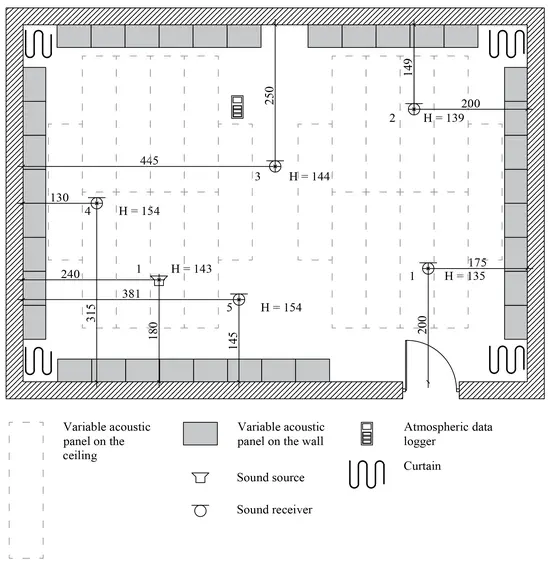

Reverberation Enhancement System

Combining digital signal processing with acoustic feedback to transform the acoustics of any space.

Concept

A reverberation enhancement system is an active system capable of controlling the room acoustics of a physical space. Microphones capture the sound present in the room, a digital signal processor enhances the signals, and loudspeakers reproduce them back into the room. This pipeline simulates changes in the geometry and absorption properties of the original space.
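The microphone, processor, and loudspeaker form a closed loop through the room, so the processed sound is picked up and processed again. The following is a minimal sketch of that regeneration, assuming a single channel and a feedback path reduced to one pure delay and one broadband gain. The function name and the parameters `loop_gain` and `loop_delay` are hypothetical and chosen for illustration only. Real systems use many channels and frequency-dependent paths.

```python
def closed_loop_response(loop_gain, loop_delay, n_samples):
    """Impulse response at the microphone of a one-channel regenerative loop.

    Assumption: the path processor -> loudspeaker -> room -> microphone is
    modeled as a single delay of `loop_delay` samples scaled by `loop_gain`.
    """
    mic = [0.0] * n_samples
    for t in range(n_samples):
        # Direct sound: a unit impulse arriving at time zero.
        direct = 1.0 if t == 0 else 0.0
        # Sound that was captured earlier, processed, and played back into the room.
        fed_back = loop_gain * mic[t - loop_delay] if t >= loop_delay else 0.0
        mic[t] = direct + fed_back
    return mic


response = closed_loop_response(loop_gain=0.5, loop_delay=10, n_samples=50)
print([response[k] for k in (0, 10, 20, 30)])
```

Each pass around the loop scales the sound by `loop_gain`, so the response in this simplified model is a geometric series of echoes. It decays only if the magnitude of the loop gain stays below one, which is why such a loop has to be kept below the point of instability.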

Acoustic Illusions for Extended Realities

Blend real and virtual sounds seamlessly by creating binaural illusions of acoustic sources.

Concept

Augmented and mixed reality systems overlay virtual sound onto the physical world. For the illusion to hold, virtual sources must be acoustically indistinguishable from real ones — a challenge that demands accurate binaural rendering, precise spatial reproduction, and an understanding of human perception.

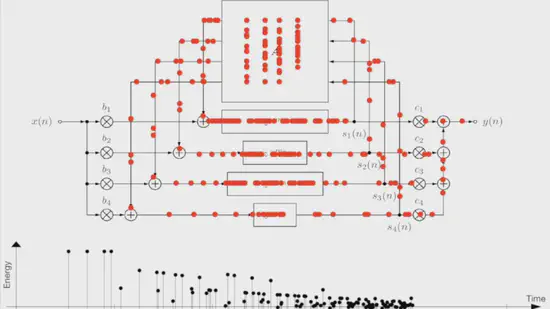

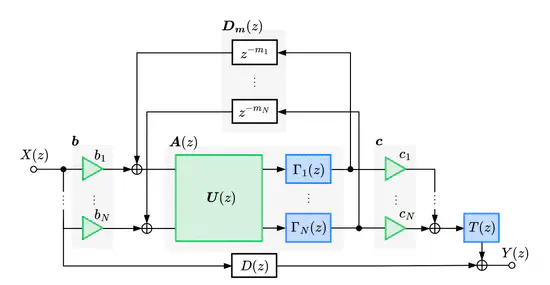

Feedback Delay Networks for Artificial Reverberation

A fast and versatile way to add reverberation to sound.

Concept

Feedback delay networks (FDNs) are recursive filters that simulate the complex sound reflections in an acoustic space. A set of delay lines is connected through a feedback matrix, producing dense, natural-sounding reverberation from a compact set of parameters. Since their introduction, FDNs have become the standard building block for real-time artificial reverberators in games, music production, and spatial audio.
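The structure described above can be sketched in a few lines of plain Python. This is an illustrative example only, not the group's implementation. It assumes four delay lines, a scaled Hadamard matrix as the feedback matrix, and a single broadband gain in place of frequency-dependent attenuation. The delay lengths, the gain value, and the function name are arbitrary choices made for this sketch.

```python
def fdn_impulse_response(delays=(149, 211, 263, 293), gain=0.97, n_samples=2000):
    """Impulse response of a small four-line feedback delay network (sketch)."""
    n = len(delays)
    h = 0.5
    # 4x4 Hadamard matrix scaled by 1/2: orthogonal, so the mixing itself is
    # lossless and all decay comes from `gain`.
    feedback = [
        [h, h, h, h],
        [h, -h, h, -h],
        [h, h, -h, -h],
        [h, -h, -h, h],
    ]
    buffers = [[0.0] * d for d in delays]  # one circular buffer per delay line
    index = [0] * n
    output = []
    for t in range(n_samples):
        x = 1.0 if t == 0 else 0.0  # unit impulse input
        # Read the oldest sample of every delay line.
        s = [buffers[i][index[i]] for i in range(n)]
        output.append(sum(s))
        # Mix the delay outputs through the feedback matrix, attenuate,
        # add the input, and write back into the delay lines.
        for i in range(n):
            mixed = sum(feedback[i][j] * s[j] for j in range(n))
            buffers[i][index[i]] = x + gain * mixed
            index[i] = (index[i] + 1) % delays[i]
    return output


ir = fdn_impulse_response()
print(len(ir), ir[149], ir[211])
```

In this sketch the first echoes appear at the individual delay lengths, after which the feedback matrix spreads each echo across all lines and the echo density grows over time. Choosing mutually prime delay lengths, as done here, is a common way to keep echoes from piling up at the same instants.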

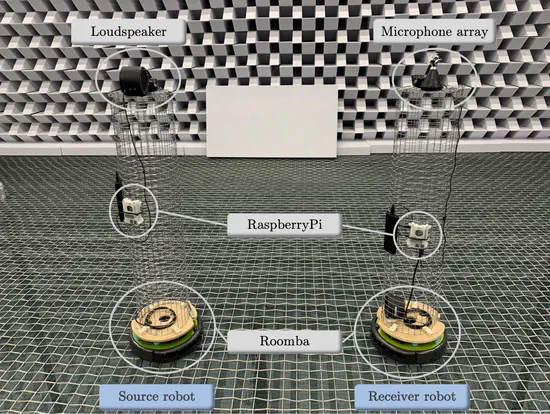

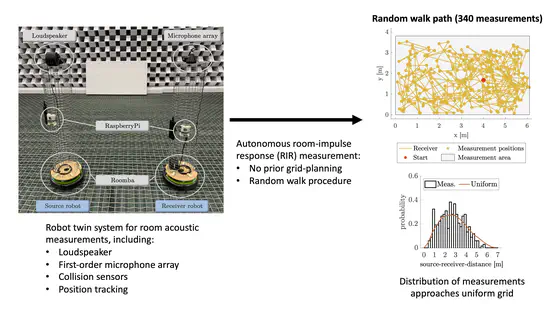

Robust Measurement of Room Acoustics

Collect and clean room impulse responses in noisy and uncontrolled environments.

Concept

Measuring how a room sounds — its reverberation, reflections, and spatial characteristics — is fundamental to acoustics research, architectural design, and audio production. But real-world measurements are messy: background noise, non-stationary disturbances, and equipment limitations corrupt the signals we rely on.

Similarity of Sound

Computational measures of perceptual similarity of sound.

Concept

How do we quantify whether two sounds are “similar”? This question arises everywhere in audio research — from evaluating whether a synthetic reverb matches a measured room, to interpolating between spatial impulse responses, to assessing whether rendering artifacts are perceptible.

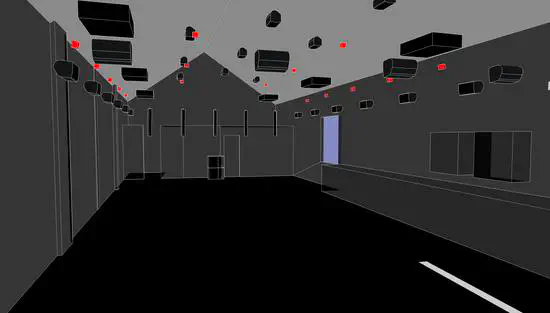

Spatial Audio & Room Transitions

Navigate through acoustic environments with six degrees of freedom.

Concept

Real spaces are not isolated boxes — rooms connect through doorways, corridors, and open plans, and listeners move freely through them. Reproducing this experience in virtual and augmented reality requires rendering spatial audio that evolves naturally as the listener walks, turns, and transitions between coupled acoustic environments.

Differentiable Audio Processing & Deep Learning

Bridging classical signal processing with modern machine learning for audio.

Concept

Classical audio signal processing offers transparent, interpretable algorithms — but tuning their parameters to match complex acoustic targets remains an open challenge. Deep learning brings powerful optimization, but often at the cost of interpretability and efficiency.

Courses

Virtual Acoustics Lab

Simulate and measure acoustic environments virtually — room acoustics, auralization, and immersive audio for extended realities.

Stochastic Processes

Mathematical framework for modeling random phenomena in time, with applications to signal processing for sound, images, music, and language.

Statistical Signal Processing

Fundamental techniques for analyzing and processing signals with statistical methods, including estimation theory, detection, and adaptive filtering.

Music Processing & Synthesis

Digital signal processing techniques for music analysis, synthesis, and transformation — from classic synthesis methods to modern neural audio.

Coding Virtual Worlds

Program interactive virtual environments with spatial audio, 3D graphics, and real-time signal processing for immersive experiences.