Acoustic Illusions for Extended Realities

Blend real and virtual sounds seamlessly by creating binaural illusions of acoustic sources.

Concept

Augmented and mixed reality systems overlay virtual sound onto the physical world. For the illusion to hold, virtual sources must be acoustically indistinguishable from real ones — a challenge that demands accurate binaural rendering, precise spatial reproduction, and an understanding of human perception.

Our research investigates when and why listeners accept virtual sounds as real, and develops evaluation paradigms and rendering techniques that make virtual sources more plausible.

Transfer-Plausibility

We developed the concept of transfer-plausibility[7][13]: a framework for evaluating whether virtual sources are accepted as real when real and virtual sounds coexist. This goes beyond traditional authenticity testing and captures the perceptual demands unique to AR/MR scenarios. In our comparison, the three-alternative forced-choice (3AFC) transfer-plausibility test was more sensitive than the alternative evaluation methods, which makes it well suited as a reference method for AR audio research.
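
In any three-alternative forced-choice task, a listener who is purely guessing is correct one third of the time, so results can be checked against chance with a one-sided binomial test. The sketch below is a generic illustration under that assumption, not the analysis used in [7][13]; the function name and the example counts are hypothetical.

```python
from math import comb


def p_value_vs_chance(correct: int, trials: int, chance: float = 1 / 3) -> float:
    """One-sided binomial p-value: P(X >= correct) if the listener only guesses.

    `chance` defaults to 1/3, the guessing rate of a three-alternative task.
    """
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


# Hypothetical listener: 18 correct answers in 30 trials.
print(p_value_vs_chance(correct=18, trials=30))
```

A small p-value indicates that the listener performed above chance in the task; a value near 1 is consistent with guessing.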

Binaural Rendering for 6 Degrees of Freedom

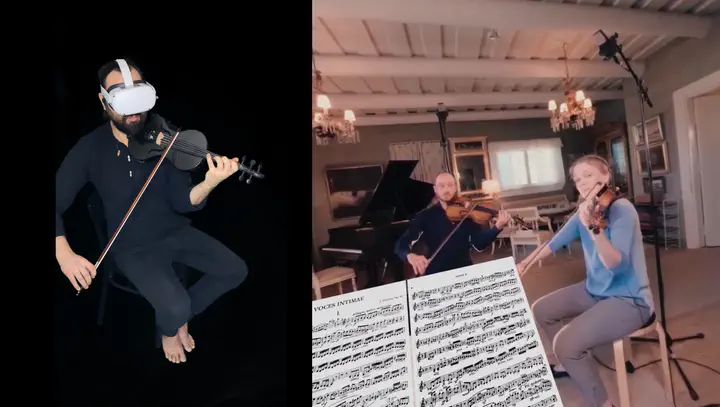

Rendering spatial audio for listeners who can freely move and rotate in a space requires processing recorded Ambisonics sound fields with distance and position information[1][2]. Our work addresses source distance modeling and listener navigation through measured sound fields. This enables experiences such as Inside the Quartet, which places the listener inside a string quartet[10].

Code: SPARTA 6DoFconv — plugin for six-degrees-of-freedom convolution with spatial room impulse responses.

Code: SRIR Interpolation Toolkit — perceptually informed interpolation of spatial room impulse responses between measurement positions.
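
As a rough illustration of source distance modeling, and separate from the tools listed above, the direct sound of a source can be rescaled and re-timed when the listener moves away from the position at which it was measured. The sketch assumes a point source in free field (1/r level decay) and a speed of sound of 343 m/s; the function name, parameters and the minimum-distance clamp are hypothetical choices, not taken from [1][2].

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed value for air at roughly 20 degrees Celsius


def direct_sound_adjustment(
    measured_distance_m: float,
    listener_distance_m: float,
    sample_rate_hz: int = 48000,
    min_distance_m: float = 0.1,
) -> tuple[float, float]:
    """Return (gain, delay_change_in_samples) for the direct sound.

    gain follows the 1/r law relative to the measured distance;
    delay_change is positive when the listener is farther from the source.
    """
    # Clamp very small distances so the gain cannot grow without bound.
    distance = max(listener_distance_m, min_distance_m)
    gain = measured_distance_m / distance
    delay_change = (distance - measured_distance_m) / SPEED_OF_SOUND_M_S * sample_rate_hz
    return gain, delay_change


# Source measured at 2 m, listener now at 4 m: half the amplitude, later arrival.
gain, delay_change = direct_sound_adjustment(2.0, 4.0)
print(gain, delay_change)
```

This covers the direct sound only; reflections and reverberation in a measured room response need separate treatment.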

Latency & Perceptual Thresholds

Low-latency processing is critical for maintaining the auditory illusion in real-time AR. We characterized the latency limits of head-tracked binaural rendering systems and their impact on plausibility[9], providing practical guidelines for system design.

Code: Latency Analyzer — tools for measuring binaural rendering latency.
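
One common way to estimate the delay between a reference signal and the signal captured at a renderer's output is to find the lag that maximizes their cross-correlation. The pure-Python sketch below shows that idea only; it is independent of the Latency Analyzer listed above, and the function name, the toy signals and the 48 kHz sample rate are assumptions made for illustration.

```python
def estimate_delay_samples(reference: list[float], recorded: list[float], max_lag: int) -> int:
    """Return the non-negative lag (in samples) that maximizes the cross-correlation."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        # Number of samples that overlap when `recorded` is shifted by `lag`.
        overlap = min(len(reference), len(recorded) - lag)
        if overlap <= 0:
            break
        score = sum(reference[i] * recorded[i + lag] for i in range(overlap))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


# Toy example: the recorded signal is the reference delayed by 7 samples.
reference = [1.0, 0.5, -0.3, 0.2]
recorded = [0.0] * 7 + reference + [0.0] * 5
lag = estimate_delay_samples(reference, recorded, max_lag=12)
sample_rate_hz = 48000  # assumed sample rate
print(lag, 1000.0 * lag / sample_rate_hz)  # delay in samples and in milliseconds
```

For measurements longer than a few thousand samples, an FFT-based correlation would be much faster than this direct loop.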

Head-Worn Device Transparency

Wearing headphones or AR glasses alters how real sounds reach the ears. We developed methods for predicting the perceptual transparency of head-worn devices[8], informing the design of passthrough processing that preserves natural listening.

Audiovisual Congruence

How do visual cues interact with spatial audio? We studied whether loudspeaker models or human avatars in VR affect localization performance[11], revealing the interplay between visual representation and spatial hearing accuracy.
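
Localization performance is often summarized as the angle between the target direction and the direction a listener indicated. The following sketch computes that great-circle angle from azimuth and elevation in degrees; it is an illustrative metric, not necessarily the one used in [11], and the function name and angle convention are assumptions.

```python
from math import acos, cos, degrees, radians, sin


def angular_error_deg(az_target: float, el_target: float, az_response: float, el_response: float) -> float:
    """Great-circle angle in degrees between two directions given as azimuth/elevation."""
    a1, e1, a2, e2 = map(radians, (az_target, el_target, az_response, el_response))
    # Spherical law of cosines, with elevation measured from the horizontal plane.
    cos_angle = sin(e1) * sin(e2) + cos(e1) * cos(e2) * cos(a1 - a2)
    # Clamp to [-1, 1] to guard against rounding errors before acos.
    return degrees(acos(max(-1.0, min(1.0, cos_angle))))


# Target straight ahead, response 90 degrees to the side in the horizontal plane.
print(angular_error_deg(0.0, 0.0, 90.0, 0.0))
```

Unlike a plain azimuth difference, this measure stays meaningful near the poles, where large azimuth changes correspond to small changes in direction.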

Room Acoustic Memory

Can listeners remember and compare the acoustic character of spaces? Our experiments[7] investigate how accurately listeners retain room acoustic impressions, informing how quickly AR systems must adapt when transitioning between environments.

Experiences

Inside the Quartet — immersive spatial audio placing the listener inside a string quartet, demonstrating high-quality binaural rendering for musical performance[10].

Space Walk — a navigable virtual planetarium for the Oculus Quest with spatialized music[4], combining stereophonic and immersive sound spatialization.