Similarity of Sound

Computational measures of perceptual similarity of sound.

Concept

How do we quantify whether two sounds are “similar”? This question arises everywhere in audio research — from evaluating whether a synthetic reverb matches a measured room, to interpolating between spatial impulse responses, to assessing whether rendering artifacts are perceptible.

Our research develops metrics and methods for comparing sounds and acoustic environments in perceptually meaningful ways, bridging the gap between signal-level differences and what listeners actually hear.

Similarity Metrics for Late Reverberation

We developed and compared computational metrics that capture perceptual similarity between reverberant sound fields[9], going beyond simple energy-based measures to account for spectral, temporal, and spatial structure. These metrics enable objective evaluation of reverberation rendering quality.

Code: similarity-metrics-for-rirs — Python toolkit for computing and comparing similarity metrics for room impulse responses.

Demo: Reverb similarity listening examples — audio comparisons with different metrics.
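As a point of reference, here is a minimal sketch of the kind of simple energy-based measure that these metrics go beyond. It computes a broadband energy decay curve (EDC) by Schroeder backward integration and takes the mean absolute dB difference between two EDCs. This is an illustrative baseline written for this page, not the toolkit's API. The function names `synthetic_rir`, `energy_decay_curve_db`, and `edc_distance_db` are hypothetical, and the test RIRs are assumed to be exponentially decaying Gaussian noise.

```python
import numpy as np


def synthetic_rir(t60: float, fs: int = 48000, length_s: float = 1.5, seed: int = 0) -> np.ndarray:
    """Hypothetical test signal: Gaussian noise whose energy decays by 60 dB over t60 seconds."""
    rng = np.random.default_rng(seed)
    t = np.arange(int(length_s * fs)) / fs
    # Amplitude falls by a factor of 1000 (i.e. energy by 60 dB) after t60 seconds.
    return rng.standard_normal(t.size) * np.exp(-np.log(1000.0) * t / t60)


def energy_decay_curve_db(rir: np.ndarray) -> np.ndarray:
    """Schroeder backward integration of the squared RIR, normalized to 0 dB at the first sample."""
    energy = np.asarray(rir, dtype=float) ** 2
    edc = np.cumsum(energy[::-1])[::-1]              # energy remaining from each sample to the end
    edc = edc / edc[0]
    return 10.0 * np.log10(np.maximum(edc, 1e-12))   # floor at -120 dB to avoid log(0)


def edc_distance_db(rir_a: np.ndarray, rir_b: np.ndarray) -> float:
    """Mean absolute difference in dB between two EDCs over their common length."""
    edc_a = energy_decay_curve_db(rir_a)
    edc_b = energy_decay_curve_db(rir_b)
    n = min(edc_a.size, edc_b.size)
    return float(np.mean(np.abs(edc_a[:n] - edc_b[:n])))


if __name__ == "__main__":
    rir_short = synthetic_rir(t60=0.5)
    rir_long = synthetic_rir(t60=1.0)
    print(edc_distance_db(rir_short, rir_short))   # 0.0 for identical RIRs
    print(edc_distance_db(rir_short, rir_long))    # large: decay rates differ
```

A single broadband EDC is blind to much of what listeners notice: two RIRs with the same decay rate but a different spectral balance within the decay, or a different spatial distribution of energy, can score close to zero here. That blind spot is what spectral, temporal, and spatial metrics aim to address.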

Optimal Transport for Audio

Optimal transport theory provides a principled mathematical framework for comparing distributions. We apply it to quantify distances between time-frequency representations of audio signals[10] and to interpolate between spatial room impulse responses[6], yielding smooth, perceptually meaningful transitions between acoustic environments.

Code: SRIR Interpolation via Optimal Transport — perceptually informed interpolation of spatial room impulse responses using partial optimal transport.
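To make the transport idea concrete, the sketch below works in one dimension, where optimal transport has a closed form. The Wasserstein-1 distance between two normalized histograms (for example, magnitude spectra over frequency or energy envelopes over time) is the area between their cumulative distribution functions. Displacement interpolation averages their quantile functions. This is a simplified illustration, not the method in the linked code: it uses balanced, full transport in 1D rather than partial optimal transport on spatial room impulse responses, and all function names are hypothetical.

```python
import numpy as np


def _normalize(weights) -> np.ndarray:
    """Clip negatives and scale to unit mass so the histogram can be treated as a distribution."""
    w = np.clip(np.asarray(weights, dtype=float), 0.0, None)
    return w / w.sum()


def wasserstein_1d(x: np.ndarray, p, q) -> float:
    """W1 distance between histograms p and q on the same increasing support x.

    In 1D, W1 is the integral of |F_p - F_q|, where F is the cumulative distribution.
    """
    cdf_diff = np.cumsum(_normalize(p) - _normalize(q))[:-1]
    return float(np.sum(np.abs(cdf_diff) * np.diff(x)))


def quantile_function(x: np.ndarray, p, u: np.ndarray) -> np.ndarray:
    """Generalized inverse CDF: smallest support point whose cumulative mass reaches u."""
    cdf = np.cumsum(_normalize(p))
    idx = np.searchsorted(cdf, u, side="left")
    return x[np.minimum(idx, x.size - 1)]


def displacement_interpolate(x: np.ndarray, p, q, t: float, n_particles: int = 1000) -> np.ndarray:
    """OT (displacement) interpolation at fraction t in [0, 1], re-binned onto the support x."""
    u = (np.arange(n_particles) + 0.5) / n_particles   # equal-mass particles
    positions = (1.0 - t) * quantile_function(x, p, u) + t * quantile_function(x, q, u)
    midpoints = 0.5 * (x[1:] + x[:-1])
    bins = np.searchsorted(midpoints, positions)       # index of the nearest support point
    return np.bincount(bins, minlength=x.size) / n_particles


if __name__ == "__main__":
    x = np.arange(11, dtype=float)                     # e.g. eleven frequency bins

    def peak(k: int) -> np.ndarray:
        """Unit mass at bin k."""
        return np.eye(x.size)[k]

    print(wasserstein_1d(x, peak(2), peak(3)))         # 1.0
    print(wasserstein_1d(x, peak(2), peak(8)))         # 6.0
    print(np.linalg.norm(peak(2) - peak(3)), np.linalg.norm(peak(2) - peak(8)))  # both sqrt(2)
    print(displacement_interpolate(x, peak(2), peak(8), t=0.5))  # all mass at bin 5
    print(0.5 * peak(2) + 0.5 * peak(8))               # linear crossfade: two half-height peaks
```

The Euclidean distance between two isolated peaks is the same whether they are one bin or six bins apart, whereas W1 grows with the shift. Likewise, the transport interpolation moves a single peak to the midpoint instead of crossfading two copies. This is the intuition behind transport-based transitions. Real spectra and impulse responses are not unit impulses, and partial transport further relaxes the requirement that all of the mass be matched.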

Source Signal Similarity & Room Perception

How does the source signal itself affect our ability to hear differences between rooms? We investigated how the similarity of source material — speech, music, noise — influences listeners’ ability to distinguish between different positions in a room[8], revealing that source characteristics interact strongly with spatial perception.

Perceptual Roughness

Sparse noise signals used in efficient spatial audio rendering can introduce roughness artifacts. We quantified the perceptual roughness of spatially assigned sparse noise[2][7], establishing the thresholds at which rendering artifacts become audible and guiding the design of velvet noise reverberators.

Demo: Velvet noise roughness analysis — frequency-dependent temporal roughness of velvet noise, with listening examples.
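Velvet noise is a standard example of such sparse noise. Each grid period of Td = fs / density samples contains exactly one pulse of amplitude +1 or -1 at a random position. The sketch below generates velvet noise and then, as a hypothetical illustration of spatial assignment, sends each pulse to one randomly chosen output channel. Each channel then carries only about 1/N of the pulses, so its own pulse density is lower, and lower density is generally associated with audible roughness. The channel-assignment rule and the function names are assumptions for illustration, not the scheme evaluated in the cited studies.

```python
import numpy as np


def velvet_noise(density_hz: float, fs: int, length_s: float, seed=None):
    """Velvet noise: one +/-1 pulse at a random position within each grid period Td = fs / density.

    Returns the signal together with the pulse positions and signs.
    """
    rng = np.random.default_rng(seed)
    td = fs / density_hz                        # grid period in samples
    n_samples = int(round(length_s * fs))
    n_pulses = int(n_samples // td)
    m = np.arange(n_pulses)
    # Pulse m lands at a uniformly random offset within its own grid period.
    positions = np.round(m * td + rng.random(n_pulses) * (td - 1)).astype(int)
    signs = rng.choice(np.array([-1.0, 1.0]), size=n_pulses)
    signal = np.zeros(n_samples)
    signal[positions] = signs
    return signal, positions, signs


def assign_to_channels(positions, signs, n_samples: int, n_channels: int, seed=None) -> np.ndarray:
    """Hypothetical spatial assignment: each pulse goes to one uniformly random channel."""
    rng = np.random.default_rng(seed)
    channel = rng.integers(0, n_channels, size=positions.size)
    out = np.zeros((n_channels, n_samples))
    out[channel, positions] = signs
    return out


if __name__ == "__main__":
    fs = 48000
    mono, pos, sgn = velvet_noise(density_hz=2000, fs=fs, length_s=1.0, seed=1)
    multi = assign_to_channels(pos, sgn, mono.size, n_channels=4, seed=2)
    print(np.count_nonzero(mono))               # 2000 pulses in one second
    print(np.count_nonzero(multi, axis=1))      # roughly a quarter of them per channel
```

Summing the channels recovers the original mono velvet noise exactly, so the sparseness in this sketch is a property of each channel viewed in isolation rather than of the overall pulse train.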

Room Acoustic Memory & Room Transitions

How accurately do listeners remember and compare room acoustics? Our experiments reveal the limits of acoustic memory[3][6], directly informing the design of systems that transition between coupled rooms. This includes work on the perceived aperture position during room transitions[4] and the perceptual analysis of directional late reverberation[1].

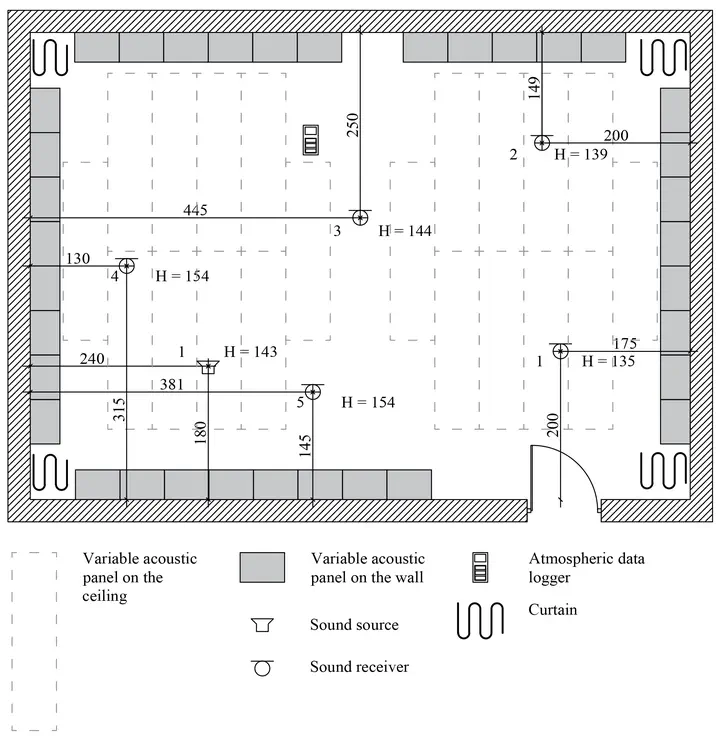

Data: Room transition datasets — measured acoustic data of transitions between coupled rooms.

Demo: Where you are in a room — interactive exploration of room position perception.