Spatial Audio & Room Transitions

Navigate through acoustic environments with six degrees of freedom.

Concept

Real spaces are not isolated boxes — rooms connect through doorways, corridors, and open plans, and listeners move freely through them. Reproducing this experience in virtual and augmented reality requires rendering spatial audio that evolves naturally as the listener walks, turns, and transitions between coupled acoustic environments.

Our research tackles the full pipeline: from measuring and modeling the acoustics of connected spaces, to rendering smooth room transitions, to evaluating whether the result sounds convincing to listeners.

Room Transitions in Coupled Spaces

When sound travels between connected rooms, the reverberant energy first increases and only then decays, which creates a convex energy decay curve rather than the typical concave shape. We characterized this fade-in phenomenon[2][3] and developed auralization methods[5][9] for smooth transitions between coupled acoustic environments, including real-time rendering[11].
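As a rough illustration of the fade-in shape, the sketch below builds an energy envelope from two exponential decays with opposite signs, so that the energy starts at zero, rises to a peak, and then decays. This is an assumed toy model for intuition only, not the method of the cited papers; the function names and the two reverberation times are hypothetical.

```python
import math


def decay_rate(t60):
    """Energy decay rate in 1/s for a reverberation time T60 in seconds."""
    # A 60 dB energy drop corresponds to a factor of 1e6.
    return math.log(1e6) / t60


def fade_in_envelope(t, t60_slow, t60_fast):
    """Toy energy envelope: slow decay minus fast decay (zero at t = 0)."""
    return math.exp(-decay_rate(t60_slow) * t) - math.exp(-decay_rate(t60_fast) * t)


def peak_time(t60_slow, t60_fast):
    """Time at which the toy envelope reaches its maximum."""
    a_slow = decay_rate(t60_slow)
    a_fast = decay_rate(t60_fast)
    # Setting the derivative to zero gives exp((a_fast - a_slow) t) = a_fast / a_slow.
    return math.log(a_fast / a_slow) / (a_fast - a_slow)


if __name__ == "__main__":
    t60_slow, t60_fast = 1.2, 0.3  # hypothetical reverberation times in seconds
    t_peak = peak_time(t60_slow, t60_fast)
    for t in (0.0, 0.5 * t_peak, t_peak, 2.0 * t_peak, 0.5):
        print(f"t = {t:.3f} s  energy = {fade_in_envelope(t, t60_slow, t60_fast):.4f}")
```

The negative amplitude on the fast term is what produces the initial rise; with both amplitudes positive, the same two terms would give an ordinary double-slope decay.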

Code: fade-in-reverb — implementation of fade-in reverberation for multi-room environments using the common-slope model.

Demo: Fade-in reverb examples — audio demonstrations of room transitions.

Data: Room transition datasets — measured acoustic data of transitions between coupled rooms.

Common-Slope Late Reverberation Model

The common-slope model[8] decomposes late reverberation into shared decay slopes with direction-dependent amplitudes, enabling efficient, physically motivated rendering of complex reverb fields. Its extension to coupled rooms[7] and acoustic radiance transfer[12] provides a complete framework for spatial rendering in multi-room environments.
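To make the decomposition concrete, the following sketch assumes two decay times that are shared by all directions and fits only the direction-dependent amplitudes by least squares. It is a simplified illustration under stated assumptions (two slopes, noise-free envelopes, decay times already known), not the CommonSlopeAnalysis implementation; all names and values are hypothetical.

```python
import math


def decay_kernel(t, t60):
    """Exponential energy decay that drops by 60 dB after t60 seconds."""
    return math.exp(-math.log(1e6) * t / t60)


def fit_amplitudes(times, envelope, t60_a, t60_b):
    """Least-squares amplitudes for two fixed, shared decay times."""
    ka = [decay_kernel(t, t60_a) for t in times]
    kb = [decay_kernel(t, t60_b) for t in times]
    # Normal equations of the 2-parameter linear least-squares problem.
    saa = sum(x * x for x in ka)
    sbb = sum(x * x for x in kb)
    sab = sum(x * y for x, y in zip(ka, kb))
    sya = sum(y * x for y, x in zip(envelope, ka))
    syb = sum(y * x for y, x in zip(envelope, kb))
    det = saa * sbb - sab * sab
    amp_a = (sya * sbb - syb * sab) / det
    amp_b = (syb * saa - sya * sab) / det
    return amp_a, amp_b


if __name__ == "__main__":
    times = [i * 0.005 for i in range(200)]   # 0 to 1 s
    t60_a, t60_b = 0.5, 1.5                   # hypothetical shared decay times
    true_amps = {"front": (1.0, 0.1), "back": (0.2, 0.4)}  # hypothetical
    for direction, (a, b) in true_amps.items():
        envelope = [a * decay_kernel(t, t60_a) + b * decay_kernel(t, t60_b)
                    for t in times]
        print(direction, fit_amplitudes(times, envelope, t60_a, t60_b))
```

Because the decay times are shared, each direction is described by only a handful of amplitudes, which is what keeps the representation compact.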

Code: CommonSlopeAnalysis — MATLAB toolkit for common-slope analysis of late reverberation.

Demo: Common-slope listening examples — interactive demonstrations of the model.

Demo: Dynamic rendering — real-time common-slope rendering for games and VR[10].

Spatial Room Impulse Response Rendering

Reproducing measured spatial room impulse responses (SRIRs) with full six-degrees-of-freedom (6DoF) listener movement requires two steps: interpolating between sparse measurement positions, and resynthesizing the late tail with anisotropic multi-slope decays[4]. We developed methods for resynthesizing SRIR tails that preserve directional decay characteristics lost in conventional processing.
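The interpolation step can be pictured with a generic inverse-distance weighting of per-direction decay amplitudes between measurement positions. This is a textbook scheme swapped in for illustration, not the perceptually informed method of the toolkit listed below; the positions, amplitudes, and function names are hypothetical.

```python
import math


def idw_weights(listener, positions, power=2.0, eps=1e-9):
    """Inverse-distance weights of the measurement positions for a listener."""
    dists = [math.dist(listener, p) for p in positions]
    for i, d in enumerate(dists):
        if d < eps:
            # Listener sits on a measurement point: use that measurement only.
            return [1.0 if j == i else 0.0 for j in range(len(positions))]
    raw = [d ** -power for d in dists]
    total = sum(raw)
    return [w / total for w in raw]


def interpolate_amplitudes(listener, positions, amplitudes):
    """Weighted average of per-direction amplitudes, one list per position."""
    weights = idw_weights(listener, positions)
    n_dirs = len(amplitudes[0])
    return [sum(w * a[d] for w, a in zip(weights, amplitudes))
            for d in range(n_dirs)]


if __name__ == "__main__":
    positions = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0)]       # hypothetical, metres
    amplitudes = [[1.0, 0.2], [0.4, 0.6], [0.8, 0.8]]      # two directions each
    print(interpolate_amplitudes((0.5, 0.5), positions, amplitudes))
```

Interpolating compact decay parameters rather than raw waveforms avoids comb-filter artifacts from summing misaligned impulse responses, although a practical system would still need to handle the direct sound and early reflections separately.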

Code: Directional Multi-Slope RIR Resynthesis — anisotropic multi-slope decay envelope resynthesis.

Demo: Resynthesis listening examples — audio comparisons.

Code: SRIR Interpolation Toolkit — perceptually informed interpolation of SRIRs between measurement positions.

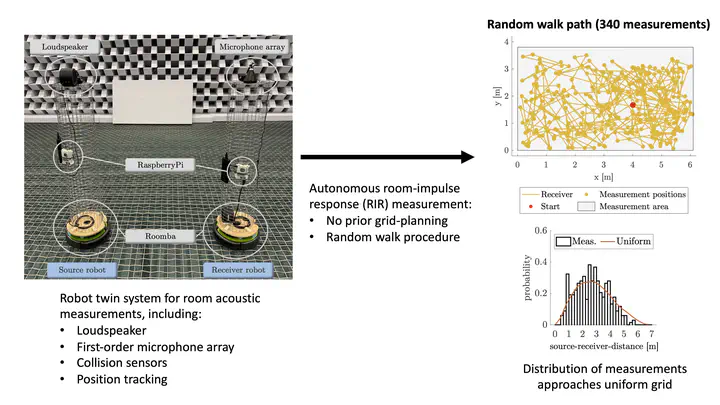

Autonomous Room Measurement

The ARTSRAM robot twin system[4a] uses autonomous Roomba-based platforms with loudspeakers and microphone arrays to collect hundreds of room impulse response measurements through random walk procedures — no prior grid planning required.
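A random-walk measurement plan can be sketched as follows, assuming a rectangular room, a fixed step length, and a rule that redraws the heading whenever a step would leave the room. The actual ARTSRAM navigation logic is not described here, so every name and parameter below is hypothetical.

```python
import math
import random


def random_walk(n_points, room=(6.0, 4.0), step=0.5, margin=0.3, seed=0):
    """Return n_points (x, y) positions of a random walk inside the room."""
    rng = random.Random(seed)
    x = rng.uniform(margin, room[0] - margin)
    y = rng.uniform(margin, room[1] - margin)
    path = [(x, y)]
    while len(path) < n_points:
        heading = rng.uniform(0.0, 2.0 * math.pi)
        nx = x + step * math.cos(heading)
        ny = y + step * math.sin(heading)
        # Reject steps that would bring the robot too close to a wall.
        if margin <= nx <= room[0] - margin and margin <= ny <= room[1] - margin:
            x, y = nx, ny
            path.append((x, y))
    return path


def measurement_pairs(n_measurements, seed=0):
    """Pair the paths of a source robot and a receiver robot."""
    source_path = random_walk(n_measurements, seed=seed)
    receiver_path = random_walk(n_measurements, seed=seed + 1)
    return list(zip(source_path, receiver_path))


if __name__ == "__main__":
    for source, receiver in measurement_pairs(5):
        print(f"source {source[0]:.2f}, {source[1]:.2f}   "
              f"receiver {receiver[0]:.2f}, {receiver[1]:.2f}")
```

Each pair corresponds to one impulse response measurement, so the number of source-receiver combinations grows with the walk length without any grid having to be laid out in advance.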

Demo: ARTSRAM project page — measurement setup and results.

Velvet Noise for Spatial Reverberation

Velvet noise, a sparse sequence of randomly placed pulses with random signs[6], provides an efficient basis for multichannel reverberation rendering. Dark velvet noise extends this to non-exponential decay modeling, and our perceptual studies establish the thresholds at which rendering artifacts become audible.
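A basic velvet-noise sequence can be generated by dividing time into equal grid cells and placing one pulse of random sign at a random position in each cell. The sketch below follows this common textbook construction; the sample rate, pulse density, and function name are illustrative assumptions, and the dark and interleaved variants mentioned in this section are not covered.

```python
import random


def velvet_noise(duration_s, fs=48000, density=2000, seed=0):
    """Return a list of samples that are 0.0 except for sparse +1/-1 pulses.

    density is the average number of pulses per second.
    """
    rng = random.Random(seed)
    grid = fs / density                    # average pulse spacing in samples
    n_samples = int(duration_s * fs)
    n_pulses = int(n_samples / grid)
    signal = [0.0] * n_samples
    for m in range(n_pulses):
        # One pulse per grid cell, at a random offset within the cell.
        position = int(m * grid + rng.random() * (grid - 1) + 0.5)
        sign = 1.0 if rng.random() < 0.5 else -1.0
        signal[position] = sign
    return signal


if __name__ == "__main__":
    sequence = velvet_noise(0.5)
    pulses = [(i, s) for i, s in enumerate(sequence) if s != 0.0]
    print(len(sequence), "samples,", len(pulses), "pulses")
    print("first pulses:", pulses[:5])
```

Because almost all samples are zero and the rest are plus or minus one, convolving a signal with such a sequence reduces to a small number of additions and subtractions, which is the source of the efficiency.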

Demo: Multichannel velvet noise rendering — multichannel interleaved velvet noise.

Demo: Velvet-noise FDN — spatial reverberation with velvet noise.