downloading Olivetti faces from https://ndownloader.figshare.com/files/5976027 to /home/runner/scikit_learn_data

Each grayscale face image of size {64 \times 64} is flattened into a vector \mathbf{x} of size {4096 \times 1}. The N flattened images \mathbf{x}^{(i)}, i = 1, \dots, N, are treated as samples from the joint distribution of the random vector \mathbf{X}.
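As a minimal sketch of the flattening step, the snippet below uses a random array as a stand-in for the face images, so that it runs without a download. The array `images` and its size of 400 are assumptions for illustration. With scikit-learn, the real images could be obtained from `sklearn.datasets.fetch_olivetti_faces()`, whose `.images` attribute has the same layout.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for the real data (assumption): 400 images of 64x64 pixels in [0, 1).
images = rng.random((400, 64, 64))

# Flatten each image row by row into one 4096-dimensional vector x^(i).
X = images.reshape(len(images), -1)  # shape (N, M) = (400, 4096)

# The flattening is lossless: reshaping a row recovers the original image.
first_face = X[0].reshape(64, 64)
```

Storing one sample per row keeps the later mean and covariance computations as plain matrix operations.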

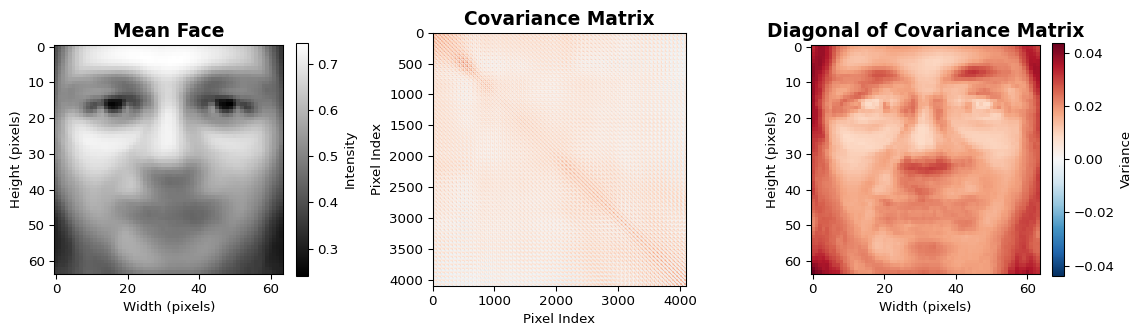

The mean face and covariance matrix are computed as

\mathbf{m} = \frac{1}{N} \sum_{i=1}^N \mathbf{x}^{(i)}, \quad \mathbf{C} = \frac{1}{N-1} \sum_{i=1}^N (\mathbf{x}^{(i)} - \mathbf{m})(\mathbf{x}^{(i)} - \mathbf{m})^T.
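The two estimators can be sketched in NumPy as follows. The helper name `mean_and_covariance` and the small random matrix `X` are hypothetical and stand in for the flattened faces, with one sample per row.

```python
import numpy as np

def mean_and_covariance(X):
    """Sample mean and unbiased covariance of X with shape (N, M), one sample per row."""
    N = X.shape[0]
    m = X.mean(axis=0)            # m = (1/N) * sum_i x^(i)
    Xc = X - m                    # centered samples
    C = Xc.T @ Xc / (N - 1)       # sum of outer products, divided by N - 1
    return m, C

rng = np.random.default_rng(0)
X = rng.random((50, 16))          # stand-in data (assumption): N = 50, M = 16
m, C = mean_and_covariance(X)
```

The result should agree with `np.cov(X, rowvar=False)`, which uses the same N - 1 normalization by default.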

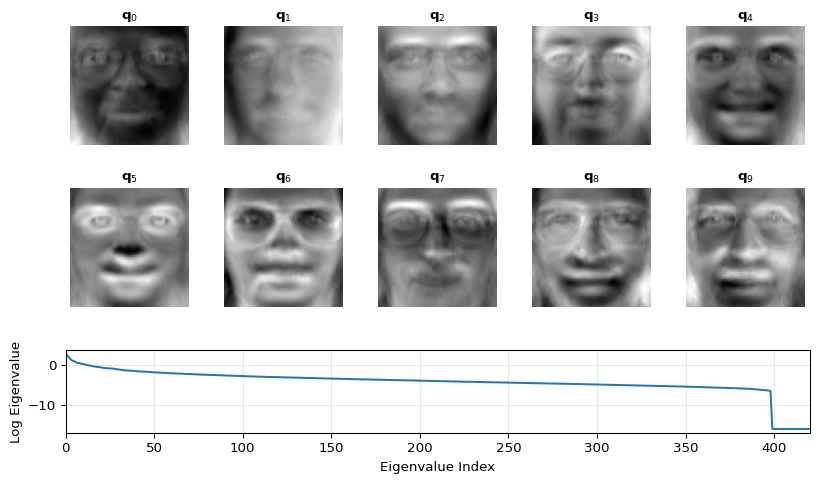

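An eigendecomposition \mathbf{C} = \mathbf{Q} \mathbf{\Lambda} \mathbf{Q}^H yields the eigenvectors \mathbf{Q} = [\mathbf{q}_1, \dots, \mathbf{q}_M] with M = 4096, the eigenfaces, and the eigenvalues on the diagonal of \mathbf{\Lambda}, each of which is the variance captured by the corresponding component. Since \mathbf{C} is real and symmetric, \mathbf{Q} is real and \mathbf{Q}^H = \mathbf{Q}^T.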
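A minimal sketch of the eigendecomposition, assuming NumPy and a small random covariance matrix in place of the face covariance. The helper name `eigenfaces` is hypothetical. `np.linalg.eigh` is used because the covariance matrix is symmetric. It returns eigenvalues in ascending order, so they are reordered to put the largest variance first.

```python
import numpy as np

def eigenfaces(C):
    """Eigenvalues (descending) and matching eigenvectors (as columns) of a symmetric C."""
    eigvals, Q = np.linalg.eigh(C)        # ascending eigenvalues, orthonormal columns
    order = np.argsort(eigvals)[::-1]     # indices from largest to smallest eigenvalue
    return eigvals[order], Q[:, order]

rng = np.random.default_rng(0)
X = rng.random((50, 16))                  # stand-in data (assumption)
C = np.cov(X, rowvar=False)
lam, Q = eigenfaces(C)
```

With N samples, the sample covariance has rank at most N - 1, so when N is smaller than the number of pixels only the leading N - 1 eigenvalues can be nonzero.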

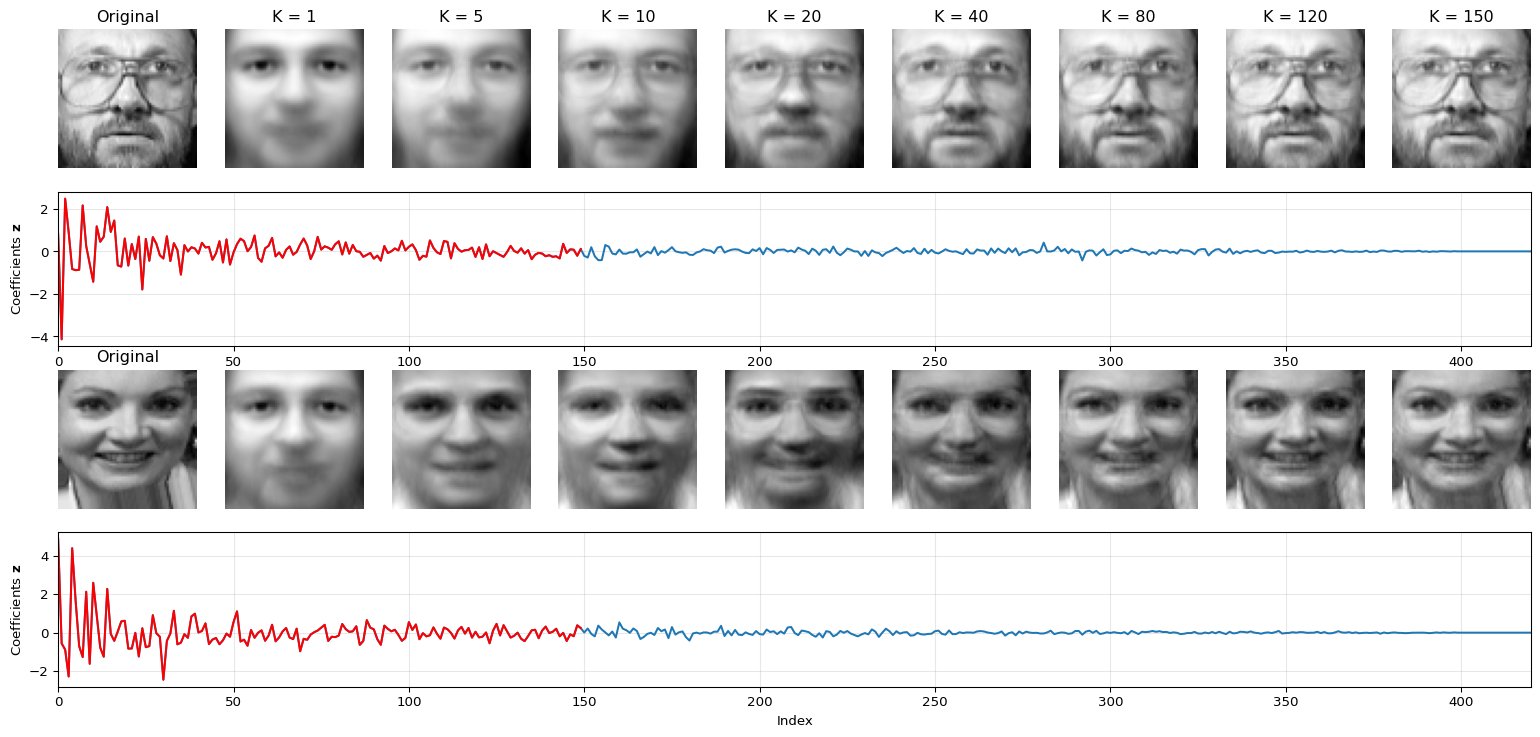

Using the discrete Karhunen-Loève transform (DKLT), the face images can be compressed for storage or transmission. Each face \mathbf{x} is represented in the eigenface basis, with the eigenvectors ordered by decreasing eigenvalue, and can be approximately reconstructed from only the first K \ll M of its M = 4096 coefficients:

\mathbf{z} = \mathbf{Q}^H (\mathbf{x} - \mathbf{m}), \quad \hat{\mathbf{x}} = \mathbf{m} + \sum_{k=1}^{K} z_k \mathbf{q}_k.
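The analysis and truncated synthesis steps can be sketched as below. The function names `dklt_compress` and `dklt_reconstruct` and the random stand-in data are assumptions for illustration, not the notebook's own code. The columns of `Q` are assumed to be sorted by decreasing eigenvalue.

```python
import numpy as np

def dklt_compress(x, m, Q, K):
    """First K DKLT coefficients of x: z = Q_K^T (x - m)."""
    return Q[:, :K].T @ (x - m)

def dklt_reconstruct(z, m, Q):
    """Approximate x from its first K = len(z) coefficients: m + sum_k z_k q_k."""
    K = z.shape[0]
    return m + Q[:, :K] @ z

rng = np.random.default_rng(0)
X = rng.random((50, 16))                       # stand-in data (assumption)
m = X.mean(axis=0)
lam, Q = np.linalg.eigh(np.cov(X, rowvar=False))
lam, Q = lam[::-1], Q[:, ::-1]                 # largest eigenvalue first

x = X[0]
# Reconstruction error for an increasing number of retained coefficients.
errors = [
    np.linalg.norm(x - dklt_reconstruct(dklt_compress(x, m, Q, K), m, Q))
    for K in (4, 8, 16)
]
```

The error cannot grow as K increases, and it vanishes up to rounding when all M coefficients are kept.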

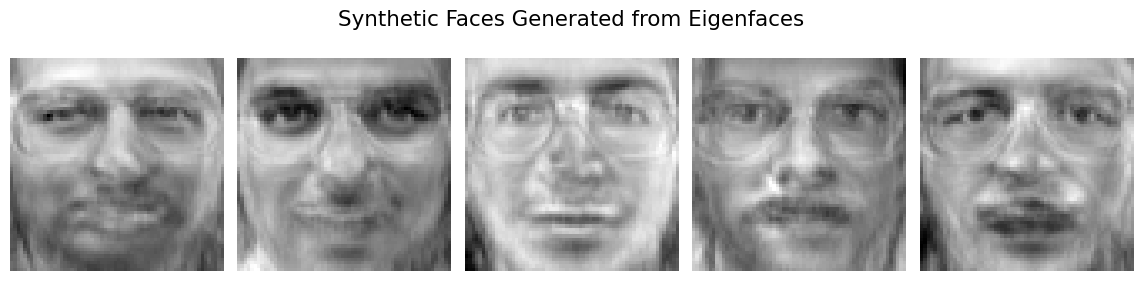

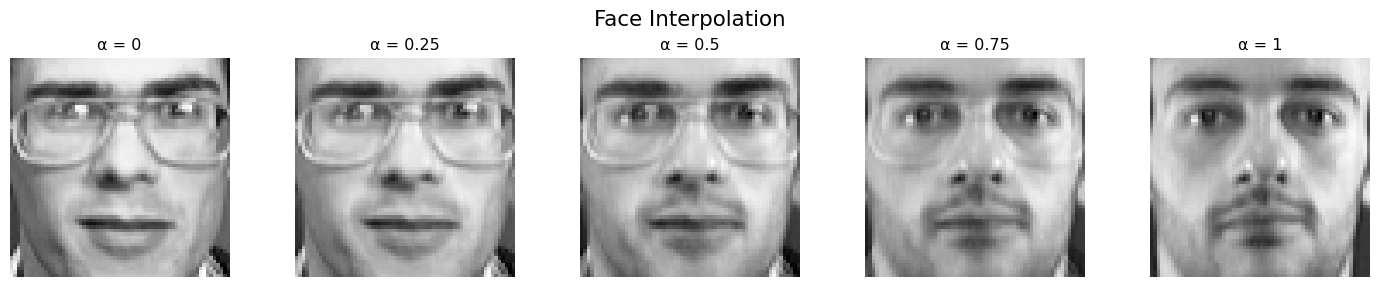

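By sampling new features \mathbf{z} from \mathcal{N}(\mathbf{0}, \mathbf{\Lambda}), or by interpolating between the features of two existing faces, \mathbf{z}(t) = t \mathbf{z}^{(i)} + (1-t)\mathbf{z}^{(j)} with t \in [0, 1], we can generate new faces via \hat{\mathbf{x}} = \mathbf{m} + \mathbf{Q}\mathbf{z}.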
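Both ways of generating features can be sketched as follows. The interpolation is taken here as the convex combination t z_i + (1 - t) z_j, so that t = 1 returns the first face and t = 0 the second. The helper names and the toy values for `lam`, `Q`, and `m` are hypothetical.

```python
import numpy as np

def sample_features(lam, rng):
    """Draw z ~ N(0, diag(lam)): independent components with variances lam[k]."""
    return np.sqrt(lam) * rng.standard_normal(lam.shape[0])

def interpolate(z_i, z_j, t):
    """Convex combination of two feature vectors, with t in [0, 1]."""
    return t * z_i + (1.0 - t) * z_j

def synthesize(z, m, Q):
    """Map features back to pixel space: x = m + Q_K z, with K = len(z)."""
    return m + Q[:, : z.shape[0]] @ z

rng = np.random.default_rng(0)
lam = np.array([4.0, 1.0, 0.25])   # toy eigenvalues (assumption)
Q = np.eye(3)                      # toy orthonormal basis (assumption)
m = np.zeros(3)                    # toy mean (assumption)

z_new = sample_features(lam, rng)
z_a = np.array([1.0, 0.0, 0.0])
z_b = np.array([0.0, 2.0, 0.0])
z_mid = interpolate(z_a, z_b, 0.5)
x_mid = synthesize(z_mid, m, Q)
```

Because \mathbf{\Lambda} is diagonal, sampling reduces to scaling independent standard normal draws by the square roots of the eigenvalues.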

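The eigenfaces are not translation-invariant at all, since the faces sit at roughly the same position in every image. Natural images, in contrast, are effectively randomly cropped, so their statistics are approximately translation-invariant. The covariance matrix of natural images therefore has an approximately block Toeplitz structure, in which each entry depends only on the offset between the two pixels. Such a covariance matrix can be estimated from a dataset like CIFAR. Its eigenvectors, the resulting basis functions, are very similar to those of the DCT.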
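The similarity to the DCT can be illustrated with a one-dimensional toy model rather than CIFAR itself. The sketch below assumes a Toeplitz covariance of the form rho^|i - j| (a first-order autoregressive model, which is an assumption and not estimated from data) and compares its eigenvectors with the DCT-II basis vectors of the same length. The names `toeplitz_covariance` and `dct_basis` are hypothetical helpers.

```python
import numpy as np

def toeplitz_covariance(n, rho):
    """Toeplitz covariance whose entries depend only on the distance |i - j|."""
    idx = np.arange(n)
    return rho ** np.abs(idx[:, None] - idx[None, :])

def dct_basis(n):
    """DCT-II basis vectors as unit-norm columns, ordered by increasing frequency."""
    pos = np.arange(n)[:, None] + 0.5
    freq = np.arange(n)[None, :]
    D = np.cos(np.pi * pos * freq / n)
    return D / np.linalg.norm(D, axis=0)

n, rho = 8, 0.95                       # toy size and correlation (assumptions)
C = toeplitz_covariance(n, rho)
lam, Q = np.linalg.eigh(C)
Q = Q[:, ::-1]                         # largest eigenvalue (lowest frequency) first

# Absolute inner product between each eigenvector and the DCT vector of equal rank.
similarity = np.abs(np.sum(dct_basis(n) * Q, axis=0))
```

Values of `similarity` close to 1 indicate that an eigenvector and the DCT basis vector of the same rank nearly coincide up to sign. For two-dimensional images the same comparison would use a block Toeplitz covariance and the 2-D DCT.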