Minimum Mean Square Error Estimator

Here, we work through some examples of the minimum mean square error (MMSE) estimator.

MMSE without Observation

When no observation is available and we estimate a random variable Y by a deterministic constant \hat{Y}, the MMSE estimate is the constant that minimizes the cost J(\hat{Y}) = \mathcal{E}\{(Y - \hat{Y})^2\}.

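Expanding the square and using linearity of expectation gives J(\hat{Y}) = \mathcal{E}\{Y^2 - 2Y\hat{Y} + \hat{Y}^2\} = \mathcal{E}\{Y^2\} - 2\hat{Y}\mathcal{E}\{Y\} + \hat{Y}^2. Setting the derivative with respect to \hat{Y} to zero yields \frac{d}{d\hat{Y}} J(\hat{Y}) = -2 \mathcal{E}\{Y\} + 2\hat{Y} = 0 \quad \rightarrow \quad \hat{Y}_{\mathrm{MMSE}} = \mathcal{E}\{Y\}. Since \frac{d^2}{d\hat{Y}^2} J(\hat{Y}) = 2 > 0, this stationary point is a minimum, and the minimum cost is J(\mathcal{E}\{Y\}) = \mathcal{E}\{Y^2\} - (\mathcal{E}\{Y\})^2 = \mathrm{Var}\{Y\}.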
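As a quick numerical sanity check of this result, the sketch below draws samples from an assumed distribution (an exponential with mean 2, chosen only for illustration), evaluates the empirical cost J(c) for every constant c on a grid, and compares the grid minimizer with the sample mean. The helper names `empirical_mse` and `best_constant_on_grid` are hypothetical, and the grid search is a deliberately brute-force check rather than a practical method.

```python
import random
import statistics

def empirical_mse(samples, c):
    """Sample estimate of J(c) = E{(Y - c)^2} for a constant estimate c."""
    return sum((y - c) ** 2 for y in samples) / len(samples)

def best_constant_on_grid(samples, lo, hi, step):
    """Brute-force search over c in [lo, hi] for the smallest empirical MSE."""
    n_steps = int(round((hi - lo) / step))
    grid = [lo + k * step for k in range(n_steps + 1)]
    return min(grid, key=lambda c: empirical_mse(samples, c))

random.seed(0)
# Hypothetical example distribution: Y ~ Exponential(rate 0.5), so E{Y} = 2.
samples = [random.expovariate(0.5) for _ in range(2000)]

c_best = best_constant_on_grid(samples, 0.0, 5.0, 0.01)
y_bar = statistics.fmean(samples)
print(f"grid minimizer: {c_best:.2f}, sample mean: {y_bar:.3f}")

# The minimum empirical cost equals the (divide-by-n) sample variance.
print(f"min MSE: {empirical_mse(samples, y_bar):.4f}, "
      f"variance: {statistics.pvariance(samples):.4f}")
```

Because the empirical cost is a parabola in c with its vertex at the sample mean, the grid minimizer should land on the grid point nearest to the sample mean, mirroring \hat{Y}_{\mathrm{MMSE}} = \mathcal{E}\{Y\} and a minimum cost equal to the variance.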

MMSE with Observation

For an observation X and a random variable Y, the MMSE estimator seeks a function \hat{Y}(X) that minimizes the mean‐squared error J(\hat{Y}) = \mathcal{E}_{X,Y}\{(Y - \hat{Y}(X))^2\}.

Using the law of total expectation, we split the joint expectation into an outer expectation over X and an inner expectation over the conditional distribution of Y given X: J(\hat{Y}) = \mathcal{E}_{X}\Big[ \mathcal{E}_{Y\mid X}\{(Y - \hat{Y}(X))^2 \mid X\} \Big].
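The decomposition can be checked exactly on a small discrete example. The sketch below assumes a hypothetical joint pmf p(x, y) and an arbitrary, deliberately non-optimal estimator \hat{Y}(x) = x + 1, then evaluates J once directly over the joint distribution and once as the nested expectation over X and Y given X. Exact rational arithmetic (`fractions.Fraction`) is used so the two results can be compared for equality.

```python
from fractions import Fraction as F

# Hypothetical joint pmf p(x, y) on a small grid; the probabilities sum to 1.
joint = {
    (0, 0): F(1, 8), (0, 1): F(1, 8), (0, 2): F(1, 8),
    (1, 1): F(1, 4), (1, 3): F(1, 8),
    (2, 2): F(1, 8), (2, 4): F(1, 8),
}

def yhat(x):
    """An arbitrary (not optimal) estimator, chosen only for this check."""
    return F(x + 1)

# Direct evaluation: J = E_{X,Y}{(Y - yhat(X))^2}.
j_direct = sum(p * (y - yhat(x)) ** 2 for (x, y), p in joint.items())

# Marginal pmf of X.
p_x = {}
for (x, _), p in joint.items():
    p_x[x] = p_x.get(x, 0) + p

# Nested evaluation: J = E_X[ E_{Y|X}{(Y - yhat(X))^2 | X} ],
# where p(y | x) = p(x, y) / p(x).
j_nested = 0
for x, px in p_x.items():
    inner = sum((p / px) * (y - yhat(x)) ** 2
                for (xx, y), p in joint.items() if xx == x)
    j_nested += px * inner

print(j_direct, j_nested)  # prints: 7/8 7/8
```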

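Inside the inner expectation, X is fixed, so \hat{Y}(X) is just a constant. Applying the no-observation result above to the conditional distribution of Y given X, the inner expectation \mathcal{E}_{Y\mid X}\{(Y - \hat{Y}(X))^2 \mid X\} is minimized by \hat{Y}_{\mathrm{MMSE}}(X) = \mathcal{E}_{Y\mid X}\{Y \mid X\}. Because the inner term is nonnegative and is minimized separately for every value of X, this choice also minimizes the outer expectation over X.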

Thus, when an observation is available, the MMSE estimator of Y is the conditional expectation of Y given X.

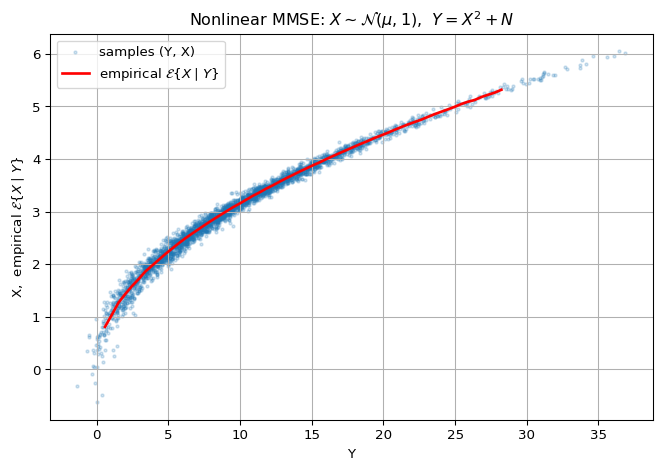
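The optimality of the conditional mean can also be illustrated by simulation. The sketch below assumes a hypothetical model, X \sim \mathrm{Uniform}(0, 1) and Y = X^2 + W with W \sim \mathcal{N}(0, 0.1^2) independent of X, for which \mathcal{E}\{Y \mid X\} = X^2 is known in closed form. It compares three estimators: the best constant (no observation), the best affine function of X fitted by least squares, and the conditional mean.

```python
import random

random.seed(1)
n = 100_000
sigma_w = 0.1  # assumed noise standard deviation for this illustration

# Hypothetical model: X ~ Uniform(0, 1), Y = X^2 + W, W ~ N(0, sigma_w^2).
xs = [random.random() for _ in range(n)]
ys = [x * x + random.gauss(0.0, sigma_w) for x in xs]

def mse(estimates, targets):
    """Empirical mean squared error between estimates and targets."""
    return sum((t - e) ** 2 for e, t in zip(estimates, targets)) / len(targets)

# 1) Best constant (no observation): the sample mean of Y.
y_mean = sum(ys) / n
mse_const = mse([y_mean] * n, ys)

# 2) Best affine estimator a*X + b, fitted by least squares.
x_mean = sum(xs) / n
cov_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / n
var_x = sum((x - x_mean) ** 2 for x in xs) / n
a = cov_xy / var_x
b = y_mean - a * x_mean
mse_affine = mse([a * x + b for x in xs], ys)

# 3) Conditional mean E{Y | X} = X^2 for this model.
mse_cond = mse([x * x for x in xs], ys)

print(f"constant: {mse_const:.4f}  affine: {mse_affine:.4f}  E{{Y|X}}: {mse_cond:.4f}")
```

For this model the theoretical values are roughly 4/45 + 0.01 \approx 0.099 for the constant, about 0.0156 for the best affine estimator, and \sigma_w^2 = 0.01 for the conditional mean, so the simulated errors should follow that ordering. Observing X helps, and using the full conditional mean rather than a straight line helps further.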

Nonlinear MMSE Example: Quadratic Measurement Model

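Model. Let the latent signal be X \sim \mathcal{N}(3, 1), i.e. with mean \mu = 3 and unit variance, and let the observation be Y = X^2 + N, \qquad N \sim \mathcal{N}(0,\sigma^2), with X and N independent. Note that the roles are reversed relative to the previous section: here X is estimated from Y, so the MMSE estimator is the conditional expectation \mathcal{E}\{X \mid Y\}.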
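Because Y depends nonlinearly on X, \mathcal{E}\{X \mid Y\} has no simple closed form here, but it can be approximated numerically. The sketch below applies Bayes' rule, p(x \mid y) \propto p(x)\, p(y \mid x), evaluates the unnormalized posterior on a uniform grid of x values, and takes the weighted average of the grid points. The noise level \sigma = 1, the grid range (\mu \pm 6 prior standard deviations), the grid step, and the helper name `posterior_mean` are all assumptions made for illustration; the text leaves \sigma symbolic.

```python
import math
import random

MU_X, VAR_X = 3.0, 1.0  # prior X ~ N(3, 1), as in the model above
SIGMA = 1.0             # assumed noise standard deviation (sigma is symbolic in the text)

# Uniform grid covering the prior mean +/- 6 prior standard deviations.
STEP = 0.01
GRID = [MU_X - 6.0 + k * STEP for k in range(int(round(12.0 / STEP)) + 1)]

def posterior_mean(y, sigma=SIGMA):
    """Approximate E{X | Y = y} by a Riemann sum of prior * likelihood on GRID."""
    log_w = [-(x - MU_X) ** 2 / (2 * VAR_X) - (y - x * x) ** 2 / (2 * sigma ** 2)
             for x in GRID]
    m = max(log_w)                          # subtract the max to avoid underflow
    w = [math.exp(lw - m) for lw in log_w]  # unnormalized posterior weights
    # The constant grid step cancels between numerator and denominator.
    return sum(x * wi for x, wi in zip(GRID, w)) / sum(w)

# Simulate (X, Y) pairs from the model and compare estimators.
random.seed(2)
pairs = []
for _ in range(500):
    x = random.gauss(MU_X, math.sqrt(VAR_X))
    y = x * x + random.gauss(0.0, SIGMA)
    pairs.append((x, y))

mse_post = sum((x - posterior_mean(y)) ** 2 for x, y in pairs) / len(pairs)
mse_prior = sum((x - MU_X) ** 2 for x, _ in pairs) / len(pairs)
print(f"E{{X|Y=9}} ~ {posterior_mean(9.0):.3f}")
print(f"MSE of posterior mean: {mse_post:.4f}, MSE of prior mean: {mse_prior:.4f}")
```

The prior mean \mathcal{E}\{X\} = 3 is the no-observation MMSE estimate, with error close to the prior variance of 1; using the observation should reduce the error substantially. Note that the posterior for Y near 9 is concentrated near x = 3 rather than x = -3 because the prior places almost no mass on negative values.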